Rpi 4 Cluster - Part 5

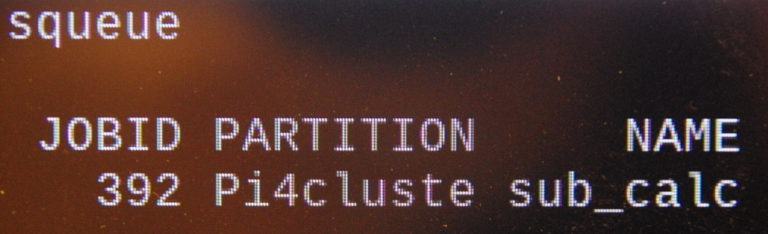

Well, here we are again, after a few misadventures, adventures, and ventures. With things back to some semblance of normality, I finally entered the Pi calculation program and finished up Garrett’s series, Part III: OpenMPI, Python, and Parallel Jobs.

One area I haven’t figured out yet is Chrony. I fixed one issue, but it seems another issue is preventing the nodes from getting the time from the server. Still working on why that is. It is especially frustrating because it was working before.

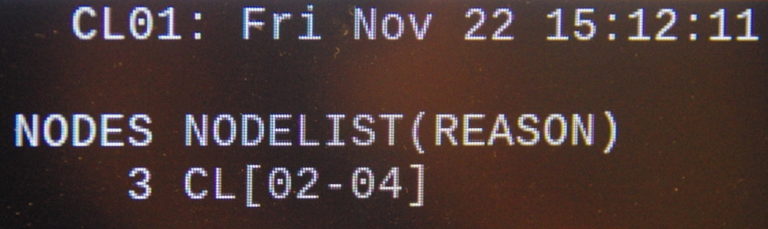

A few catch-up notes: after I wiped out the master, I found that the nodes were not coming alive like they should. Even after I redistributed the munge, slurm and cgroup files, they still wanted to remain “dead.”

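After reading the documentation, I found that with the “ReturnToService” option set to “2” in the /etc/slurm-llnl/slurm.conf file, the nodes would (sometimes) resurrect themselves. With that value, a node marked DOWN becomes available again once it registers with a valid configuration.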

One area still puzzles me, and I’m not familiar enough with munge to figure it out yet. Doing:

ssh pi@node01 munge -n | unmunge

shows ENCODE_HOST as the originating node, not node 1. It seems that before I accidentally wiped out the master, it showed the node as in Garrett’s tutorial. But at this late date, I’m not sure that was truly the case. So that’s still up in the air for now.

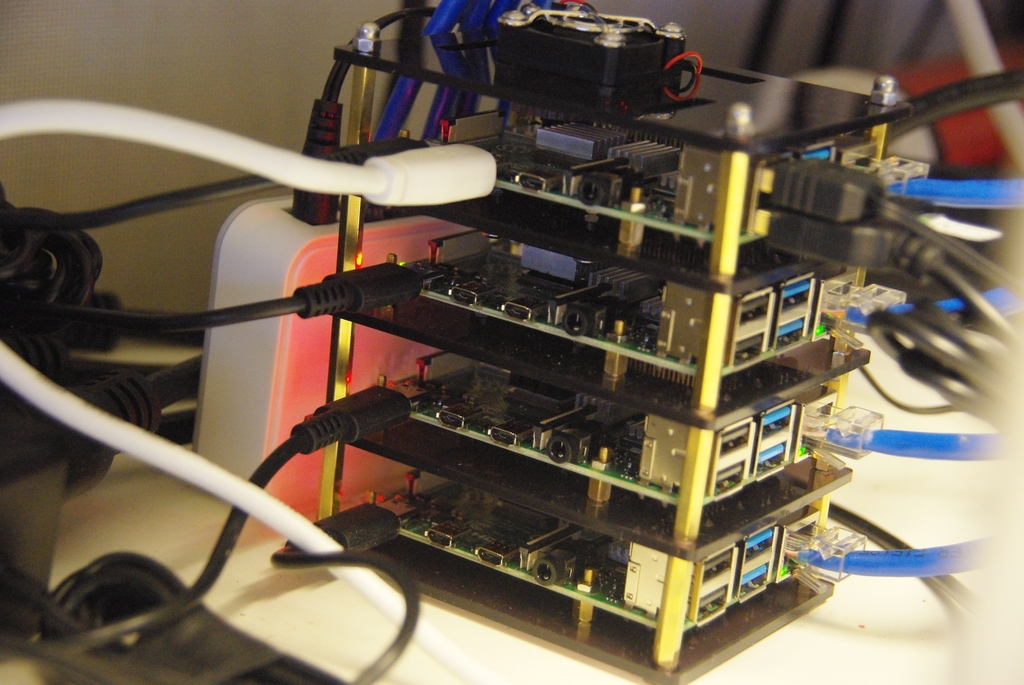

As briefly mentioned in Part 3, the nodes were getting WAY too hot, which caused them to throttle back. That is one reason the Pi calculations were taking around 15 minutes to complete. So I have gone to a different solution, as can be seen in the image at the top of this post and here.

This case from GeeekPi has much better airflow, and each board has its own fan blowing directly on the CPU. That keeps the temperature much more reasonable and seems to have cut the processing time (about) in half.

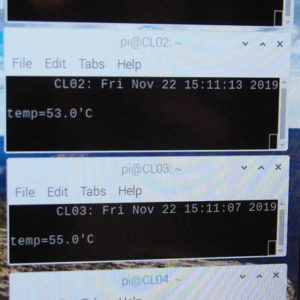

Even with the fans and the open stack, I notice some temperature differences between nodes. I think they are caused by the air being warmer toward the bottom, as the airflow is drawn over the bottom of the board above. At least, that’s my theory… Here is what I see while the Pi calculation is being performed:

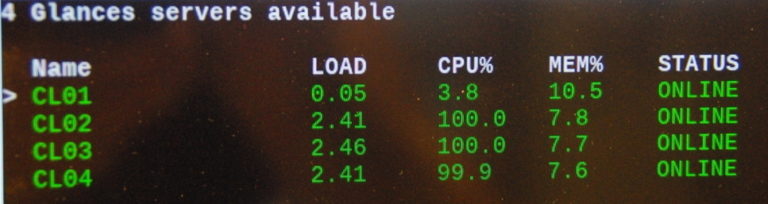
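For anyone who wants to check a node's temperature without glances, here is a minimal sketch in Python. It assumes the CPU temperature is exposed in millidegrees Celsius at /sys/class/thermal/thermal_zone0/temp, which is the usual location on Raspberry Pi OS but may differ on other systems. The function names are my own, not from Garrett’s tutorial.

```python
from pathlib import Path

# Assumed location of the CPU temperature (in millidegrees Celsius).
# This is the usual sysfs path on Raspberry Pi OS; adjust if yours differs.
THERMAL_FILE = Path("/sys/class/thermal/thermal_zone0/temp")


def parse_millidegrees(raw: str) -> float:
    """Convert a raw sysfs reading such as '48312\n' to degrees Celsius."""
    return int(raw.strip()) / 1000.0


def read_cpu_temp(path: Path = THERMAL_FILE) -> float:
    """Read the current CPU temperature in degrees Celsius from sysfs."""
    return parse_millidegrees(path.read_text())


if __name__ == "__main__":
    if THERMAL_FILE.exists():
        print(f"CPU temperature: {read_cpu_temp():.1f} C")
    else:
        print("No thermal zone file found at", THERMAL_FILE)
```

Running something like this on each node while the Pi job is going would be one way to compare the top of the stack with the bottom.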

The last image shows the loads and CPU usage for each node. Glances runs as a service on each node, so once started it does not need to be restarted, but it does have to be running. I had added “glances -s” to the /etc/rc.local file on each node, then later commented those lines out, but the services were still enabled, so they loaded at each reboot. In other words, the control node does not “start them running” on each node.

So I still have several issues: the “chrony” one mentioned earlier, the munge/unmunge issue, and the temp display in glances. That’s where things stand now.

One more little tidbit about the Pi calculation program. It seems Python only works with about 15 digits of precision for Pi, so increasing the number of intervals eventually reaches a point of diminishing returns. Having said that, the ideal number of intervals for the smallest error seems to be (about) 10 trillion and some change. I say (about) because I did not attempt to use non-integer numbers. What I did use is 10 trillion and 90. That looks like this: _n=10000000000090. Or, for readability: 10,000,000,000,090. That allows the program to calculate Pi as 3.141592653589793(1). Changing to 89 or 91 increases the calculated error relative to the internal Pi that Python works with.
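To see the precision limit on a small scale, here is a rough single-process sketch. It assumes the Pi program approximates the integral of 4 / (1 + x^2) from 0 to 1 with the midpoint rule, which is a common way to do it but may not match the tutorial's code exactly. The function name estimate_pi is my own. Python floats are 64-bit doubles, so math.pi itself is only good to roughly 15 or 16 significant digits, and no number of intervals can get past that.

```python
import math
import sys


def estimate_pi(n: int) -> float:
    """Midpoint-rule estimate of the integral of 4 / (1 + x^2) over [0, 1]."""
    h = 1.0 / n  # width of each interval
    total = 0.0
    for i in range(n):
        x = h * (i + 0.5)  # midpoint of interval i
        total += 4.0 / (1.0 + x * x)
    return total * h


if __name__ == "__main__":
    # Number of decimal digits a float can always represent faithfully.
    print("float digits:", sys.float_info.dig)
    print("math.pi     :", repr(math.pi))
    # Small interval counts only, so this finishes quickly on one core.
    for n in (10, 1_000, 100_000):
        approx = estimate_pi(n)
        print(f"n={n:>7}  pi~{approx:.15f}  error={abs(approx - math.pi):.3e}")
```

The error shrinks quickly as n grows, but once it gets near the last digit a double can hold, rounding in the running sum starts to matter as much as the interval count. That is likely why nudging n by one in either direction changes the final digit.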

So, this is about the end of this series. The cluster is working (for the most part) and can do things. Now comes, like all series I have run across about clusters, the part of determining what to do with it…

And for those who just can’t get enough pi, what with the Holidays approaching… Here’s some more pi for you:

3.141592653589793 238462 643383 279502 884197 169399 375105 820974 944592 307816 4. That’s 70 decimal places for those folks who might enjoy reading “The Joy of Pi.”